We Don’t Know Deployment: A 4-Step Remedy

So here’s my problem.

We don’t know deployment. We work from same copy on one test server through ftp and then upload live on FTP. We have some small projects and some big collaborative projects.

We host all these projects on our local shared computer which we call test server.

All guys take code from it and return it there. We show our work to clients on that machine and then upload that work to live ftp. Do you think this is a good scenario or do we make this machine a dev server and introduce a staging server for some projects as well?

I wrote him a reply with some suggestions (and my consulting rate) attached, and we had a little email exchange about some improvements that could fit in with the existing setup, both of the hardware and of the team skills. Then I started to think … he probably isn’t the only person who is wondering if there’s a better way. So here’s my advice, now with pictures!

Starting Point

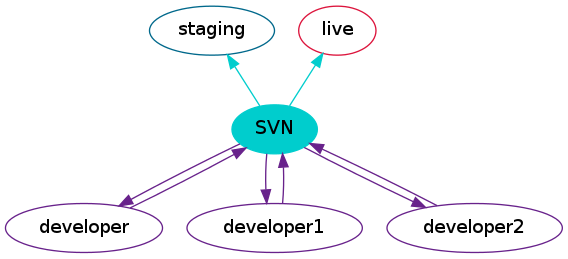

This is basically the place we begin from:

The code lives on this staging server. When you need to make a change, you copy it onto your own machine, make the change, and put it back on the staging server. From here, it can be checked over before it is put onto the live machine over FTP.

The first thing to say is: this does work, as long as you follow the process exactly. But I was asked if I thought there was a better way, and I think there is. When I work with a setup like this, there is always a moment where I have no idea if I just copied over something, or forgot to copy over something, or … you get the picture.

Step 1: Source Control

Use source control. That’s the best advice I can give anyone. Use a hosted solution, install your own, pick Subversion or Git or Mercurial, I really don’t care. But use source control.

Here’s the diagram with the source control in place, using SVN as an example (but it makes little difference what kind of source control you pick).

Hardly anything has moved, but we’ve added a piece to the middle of the puzzle. At this point, there’s a central repository where things are always kept, and always backed up. We have a history of changes, and can see who changed what. The developers can collaborate on larger changesets, and those changes can be committed to the repository even before they are ready to be placed onto the staging server, so you are much less likely to lose code when a hard drive dies.

To get the code from the repository to the staging and live servers, we can export a clean copy of code each time and upload that using FTP (or go one step further, SFTP). If you really must check out from source control repositories onto public servers, then make absolutely sure you are not serving the metadata that the source control product uses – since this is only step 1, that would be OK (but keep reading).
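If you want a safety net for that metadata warning, here’s a minimal sketch in Python that scans a directory before you upload it. The function name `find_vcs_metadata` and the list of directory names are my own choices for illustration; adjust the list to whatever your source control tool actually leaves behind.

```python
import os

# Directory names the common tools use for their metadata (an assumed list).
VCS_METADATA_DIRS = {".svn", ".git", ".hg"}


def find_vcs_metadata(root):
    """Return sorted relative paths of any VCS metadata directories under root."""
    found = []
    for dirpath, dirnames, _filenames in os.walk(root):
        for name in list(dirnames):
            if name in VCS_METADATA_DIRS:
                found.append(os.path.relpath(os.path.join(dirpath, name), root))
                # No need to descend into the metadata directory itself.
                dirnames.remove(name)
    return sorted(found)
```

Run it against the folder you are about to upload; an empty list means nothing was found. A clean export should always come back empty, while a checked-out working copy will not.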

Step 2: Branches

Now we’ve gone to all the trouble of implementing a source control solution, we may as well make the most of the features that are now available to us. This means it is time to learn to branch. A branch is just another copy of the code, but isolated from the first one. Using branches means you can:

- always have an exact copy of the current live code available

- work on more than one feature (or bug fix) at once

You would use the same setup as above, but branching means you are able to do a great many more things without any fear of who changed what or whether you can commit without hurting things.
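To make “another copy of the code” concrete, here’s a rough sketch of creating and switching to a branch in Subversion, driven from Python. The repository URL is hypothetical, and I’m assuming the conventional trunk/branches layout, which your repository may not follow. The helpers only print the commands unless you explicitly ask them to run.

```python
import subprocess

# Hypothetical repository URL; substitute your own.
REPO = "https://svn.example.com/myproject"


def branch_command(name, message):
    """Build the `svn copy` command that creates branches/<name> from trunk."""
    return ["svn", "copy", f"{REPO}/trunk", f"{REPO}/branches/{name}", "-m", message]


def switch_command(name):
    """Build the `svn switch` command that points a working copy at the branch."""
    return ["svn", "switch", f"{REPO}/branches/{name}"]


def run(command, dry_run=True):
    """Print the command; only execute it when dry_run is False."""
    print(" ".join(command))
    if not dry_run:
        subprocess.run(command, check=True)
```

Calling `run(branch_command("fix-login", "Branch for the login fix"))` shows what would happen without touching the repository. Git and Mercurial have their own equivalents, so treat this as an illustration of the idea rather than a recipe.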

I could write another thousand words about branching, but I won’t (well, unless you ask very nicely, and even then it will be written another time). Instead, look around for some resources for whichever platform you chose: the Red Bean Book is a great place to start.

Step 3: Automated Deployment

This is the jewel in the crown, especially if you run multiple sites. If you only run one site, no matter how complicated it is, you will eventually learn to get the deployment mostly right, most of the time. With multiple sites, they will all have differences and they will take turns to bite you when you least expect it (or, more likely, on Friday afternoon when you are trying to do three other things before pub o’clock). Automated deployment means fast, repeatable, painless deployment every single time.

To deploy, you probably need some kind of build process. I’ve got it here as a separate piece of the system as it’s conceptually separate, but it could easily be as simple as a few scripts running on a spare box in the office (which might also run your SVN, for example); you don’t need anything fancy.

There’s the build server, right in the middle. When changes are available in the version control system, the build server will know about them. When you are ready, you ask the build server to deploy the relevant code to either live or staging, and it does that. Nobody else touches live. For any reason. If they do, their changes get lost on the next deploy – and anyway, automated deployment is so easy that there’s no reason not to use the process!

Exactly how the code gets deployed is up to you; personally though I like phing, which comes with lots of tasks for dealing with source control and PHP-specific tools built in. It’s relatively easy to get started with and you will set it up to follow the process which is currently documented on your company wiki (it is documented, right??). Mine goes something along the lines of:

- export files from source control

- compress files

- transfer files to server

- log into server, uncompress files

- deal with database changes, upload files, and anything else which needs special attention

- make the new code live by changing the symlink that your docroot points to

Yours may be a bit different, but this is all pretty straightforward to set up with phing, or you can write your own scripts if you prefer.
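If you go the write-your-own-scripts route, the compress, uncompress and symlink steps from my list might look something like this in Python. It is a sketch rather than my actual build: the function names are made up for illustration, the transfer to the server is left out, and the symlink swap assumes a POSIX system where renaming over an existing link replaces it in one step.

```python
import os
import tarfile


def package_release(source_dir, archive_path):
    """Compress an exported copy of the code into a .tar.gz archive."""
    with tarfile.open(archive_path, "w:gz") as archive:
        # arcname="." keeps paths in the archive relative to source_dir.
        archive.add(source_dir, arcname=".")
    return archive_path


def unpack_release(archive_path, release_dir):
    """Uncompress the archive into its own, new release directory."""
    os.makedirs(release_dir)
    with tarfile.open(archive_path, "r:gz") as archive:
        archive.extractall(release_dir)
    return release_dir


def activate_release(release_dir, current_link):
    """Point the docroot symlink at release_dir, replacing any old link."""
    temp_link = current_link + ".tmp"
    if os.path.lexists(temp_link):
        os.remove(temp_link)
    os.symlink(os.path.abspath(release_dir), temp_link)
    # Renaming over the old symlink swaps it in one step on POSIX systems.
    os.replace(temp_link, current_link)
```

Because each release lives in its own directory, going back to the previous version is just another call to `activate_release` with the older directory.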

Step 4: For Bonus Points

By the time you’ve got source control and a build step set up, you have the basis for all of the cool tools – so try them out! Your “build server” can run other scripts as well, which makes it really a continuous integration server. You can get it to respond to changes in your version control and perhaps run some tests or generate the API documentation. You can add all kinds of preparation into the deployment steps, minifying JavaScript or CSS, stopping and starting worker processes; anything you can imagine!
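The heart of that kind of server is nothing more exotic than “run these steps in order and stop if one fails”. Here’s a small sketch of that idea in Python; `run_pipeline` and the step names in the usage note are hypothetical, and a real continuous integration tool does a great deal more than this.

```python
def run_pipeline(steps):
    """Run (name, callable) steps in order; stop at the first failure.

    Returns (succeeded, log) where log lists each step that was attempted.
    """
    log = []
    for name, step in steps:
        try:
            step()
        except Exception as error:
            log.append(f"FAILED {name}: {error}")
            return False, log
        log.append(f"ok {name}")
    return True, log
```

You would pass it something like `[("tests", run_tests), ("docs", build_docs), ("deploy", deploy_to_staging)]`, where each callable is one of your own scripts. The point is that a failed test run stops the deploy step from ever happening.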

Better than FTP

I’m not advocating using cool tools just for the hype; the recommendations here are the ones I use to protect myself from my own mistakes, hardware failure, projects with changing requirements, bosses with short memory spans, and all the other things that are part of everyday work. With branches in version control and some automated deployment, I can create and publish multiple changes in little more time than it takes me to write the actual code – which is really what I’m interested in!

Does this resemble your setup? Would you have given the same advice if you received this email? Let me know in the comments! (I’m sending a link to my original enquirer so it would be useful for him to hear your thoughts!)

Thank you for your valuable information. Am really in that stage as you titled the post.

I wouldn’t recommend SVN any more (it had its time, but it passed already). I agree that any source control is a great step forward, actually it was something that helped us to move forward to developing bigger apps in my first company. But after some time with SVN you’ll hear about distributed source control systems and about everything they can do. And you’ll want it (your devs definitely would). So you’ll waste time migrating stuff and learning a different approach.

So I would recommend using the best SC available. Of them all, I’m familiar only with SVN and Git, and with the latter one can do more.

Greg: the learning curve on those distributed systems makes it hard for people to begin. For me, if you’re not using source control in 2012, especially in an entirely inexperienced team, then subversion is a much more approachable place to start and it’s more important to have *something* than it is to use the new shiny. I’m still recommending subversion to the majority of organisations: many are co-located and have windows users, junior developers or designers who need to be able to work with the repository. If there’s anyone around who can help you with git/hg (and you’re right, they have many more features) then definitely go straight there, but if not … start easy.

Lorna,

Have you looked in depth at mercurial? I personally feel it has the power of git, and the ease of subversion.

I can see git has a steeper learning curve, but I didn’t feel that as much with hg.

Evert

Evert, I have but I’m glad to see another vote in its favour! All my own projects are on bitbucket, using mercurial, but it does still seem to be a bit of a minority. Mercurial for me is more humane and much easier to find your way around, I’m not sure if that’s influenced by the subversion skills I had before I moved to working with distributed systems though.

Evert, Totally agree. I got converted to Mercurial recently and to me, it simply makes sense.

Evert, I’m with you as well. Mercurial is great and I find it easily as user-friendly as subversion. hginit.com is a great tutorial for people new to Mercurial or coming from SVN.

As to the Mercurial vs. Git debate, someone explained it to me as “Git is written for programmers, Mercurial is written for people”.

Yes, the learning curve is higher. However, starting with a distributed VCS like git will prevent bad habits from forming in the first place. Come on, we are talking about programmers here. Not quite the dullest tools in the box. I think they can handle it.

It isn’t always programmers who are version controlling their work. Yes the majority of people who version control are programmers, but you need to consider the people programmers interact with day in day out who also would benefit from version controlling.

Like you said in the first paragraph and hinted at with this reply: if you are looking to re-vamp your dev-ops and methodologies and your team as a whole isn’t already using a VCS, you really should ask around and either assemble a collection of experts on different topics from within your team or hire a consultant who can share some experience on the subject. Set time aside for collaboration in determining what fits your situation and team, along with time for introduction to and hands-on familiarity with the new technologies, and for implementing the new workflow.

Hiring a consultant may be seen as an unwarranted expense, but issues arising from problems in your dev-ops infrastructure will cause a lot more trouble than the small fee for a consultant.

Enjoyed this post, and I would love to read more on branching – but even more I’d love to hear your views on database deployment!

Steve

I’ll see what I can do :)

I second this, would like to hear your thoughts on using deltas or something else to manage DB changes

A more difficult use case is for teams working with CMSs or other projects where configuration is stored both in code and (unfortunately) the database. I work with Drupal and this makes deployment a real problem – exportables do exist for many things but by no means all.

Any suggestions for managing the process when you need to share a database? Or is this just an inherently ugly scenario?

Great article, deployments can too easily go wrong without automation!

If you’re using a language that doesn’t require a build (python, PHP, node.js), you can take things one step further and make each server a repository (or working copy in SVN’s case) which pulls code directly from source control.

This adds a bit more speed and efficiency as only the changed code gets transferred. It also gives you easy rollback facilities.

I use phing for code deployment, typically with liberal use of the “scp” task. I like the idea of uploading the files and just switching a symlink (potentially making the change reversible), and I think I’ll try that in my next project.

Pretty much what I have been pushing at, although for non-web systems the developer’s workstation may be good enough as a test environment not to need a complete staging environment (and yes, databases are a pain with this kind of infrastructure).

A key advantage of automating the push from VCS to server is that the server always has something that exists in VCS, not some messy mixture of versions and a few not-checked-in files you lose at a critical moment. The next step is to use the server’s package manager to deliver the files onto the server, so it’s a one-command operation to deploy there (if your package is smart enough). This matters more if your eventual deployment is to multiple servers of course.

This is also where a centralised VCS does have advantages – anything committed has to go through there, and so is captured. With a distributed set-up, there is the risk that someone figures out how to push from their workstation straight to production, then both sets of data go AWOL ….

Well done Lorna. Awesome article.

Do you have a recommendation for a build server to use with PHP projects?

I have been using Jenkins for the most part, and I find it fits really well.